Concept of Schedule in DBMS Tutorial Study Material Notes with Example

Schedule

A schedule (or history) is a model to describe execution of transactions running in the system. When multiple transactions are executing concurrently in an interleaved fashion, then the order of execution of operations from the various transactions is known as a schedule or we can say that a schedule is a sequence of read, write, abort and corm.: operations from a set of transactions.

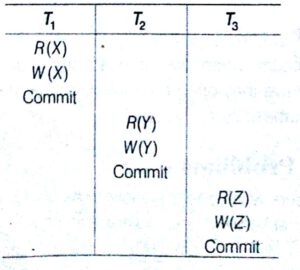

The following is an example of a schedule

The above Schedule consist of three transactions T1, T2 and T3. The first Transaction T1 reads and writes to object X and then commits. Then, T2 reads and writes to object Y and commits and finally ; reads and writes to Object Z and commit

Classification of Schedules Based on Serializability

The schedules can be classified as

Serial Schedule

A schedule in which the different transactions are not interleaved (i.e., transactions are executed from start to finish one-by-one).

Complete Schedule

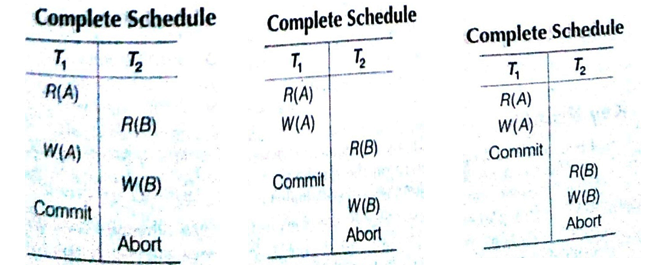

A schedule that contains either a commit or an abort action for each transaction.

Note: Consequently, a complete schedule wilt not contain any active transaction at the end of the schedule.

Non-serial Schedules

Non-serial schedules are interleaved schedules and these schedules improve performance of system (i.e., throughput and response concurrence or interleaving operations in schedules might lead the database to an inconsistent state.

We need to seek to identify schedules that are

- As fast as interleaved schedules.

- As consistent as serial schedules.

Conflicting Operations: When two or more transactions in a schedule execute concurrently, then there may be some operations.

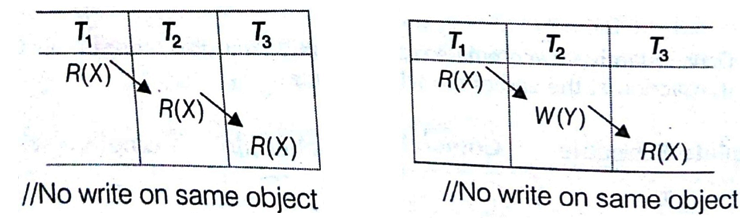

Two operations are said to be conflicting, if they satisfy all of the following conditions.

- The operations belong to different transactions.

- Atleast one of the operations is a write operation.

- The operations access the same object or item.

The following set of operations is conflicting

While the following sets of operations are not conflicting

Key Points

- If two conflicting operations are applied in different orders in two schedules, the effect can be different on the database or on other transactions in the schedule, hence the schedules are not conflict equivalent.

- In view equivalence respective transactions in the two schedules read and write the same data values while in conflict equivalence, respective in two two schedules have the same order of conflicting operations.

Serializable Schedule

A schedule S Of n transactions is serialzable. if it is, equivalent to some Serial schedule of the same n transaction,

A non-serial schedule S is equivalent to saying that it is correct, because it is equivalent to a serial schedule.

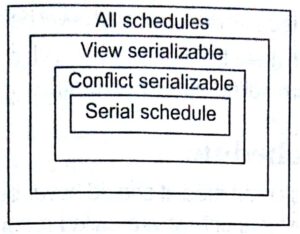

There are Two types of serializable schedule

- Conflict serializable schedule

- View serializable schedule

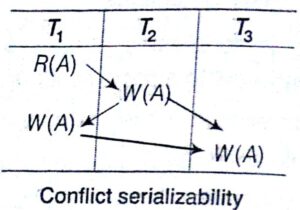

Conflict Serializable Schedule

when the schedule (S) is conflict equivalent to some serial schedule (S’) , then that schedule is called as conflict serializable schedule. In such a case, we can reorder the non-conflicting operations in S until we form the equivalent serial schedule S’.

Conflict Equivalence

The schedules S1 and S2 are said to be conflict equivalent, if the following conditions are satisfied

- Both schedules S1 and S2 involve the same set of transactions (including ordering of operations within each transaction).

- The order of each pair of conflicting actions in S1 and S2 are the same.

Testing for Conflict Serializability of a Schedule

There is a simple algorithm that can be used to test a schedule for conflict serializability, This algorithm constructs a precedence graph (or serialization graph), which is a directed graph.

A Precedence e graph for a schedule S contains.

- A node for each committed transaction in

- An edge from T to Tj if an action of Ti precedes and conflicts With one of Tj‘s operations.

A schedule S is conflict serializable if and only if its precedence graphs is acyclic.

Note: The above example of schedule is not conflict serializable schedule. However, in general, several serial schedules can be equivalent to any serializable schedule S, if the precedence graph for S has no cycle and if the precedent: graph has a cycle, it is easy to show that we cannot create any equivalent serial schedule, so S is not serializable.

– Key Points………………………………………………………………………..

- Serial schedule is sic ever but guarantees consistency (correctness).

- Every serial schedule is a*serializable schedule but not every serializable schedule is serial sch

- Being serializable includes that

The schedule is a correct schedule, if

It will leave the database in a consistent state and the interleaving is appropriate and I will result in a state as if the transaction were serially executed.

A schedule is a efficient (interleaved) schedule.

View Serializable Schedule

A schedule is view serializable, if it is view equivalent to some serial schedule.

conflict serializable => View serializable, but not vice-versa.

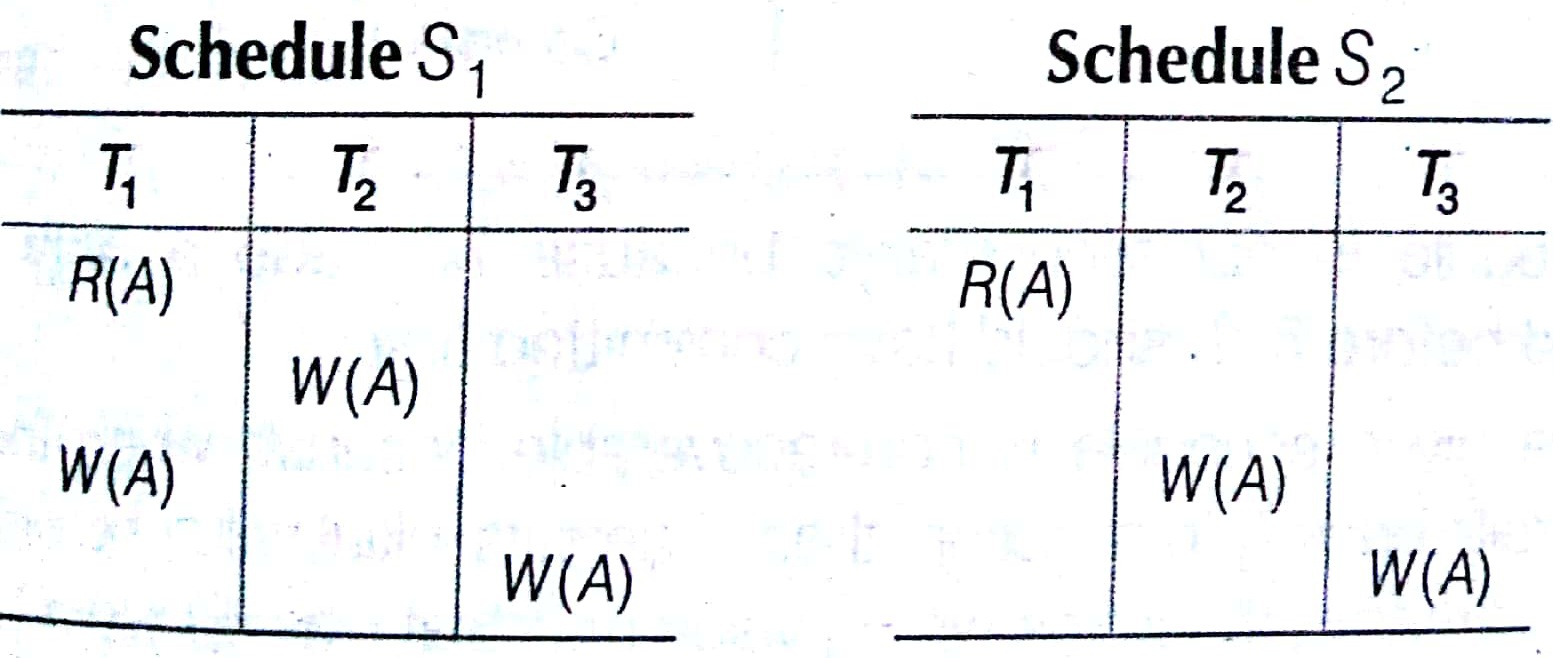

View Equivalence

Two schedules S1 and S2 are view equivalent,

(i)Tj reads initial value of database object A in schedule Si, then Ti also reads initial value of database object A in schedule S2.

(ii) Ti reads value of A written by Ti in schedule Si, then 7; also reads value of A written by Ti in schedule S2.

- Tj writes final value of A in schedule Si, then Tj also writes final value of A in S2.

In the above example, both schedules S1 and S2 are view serializable schedules. But Si schedule is not conflict serializable schedule and S2 is not conflict serializable schedule because cycle is not formed.

Classification of Schedules Based on Recoverability

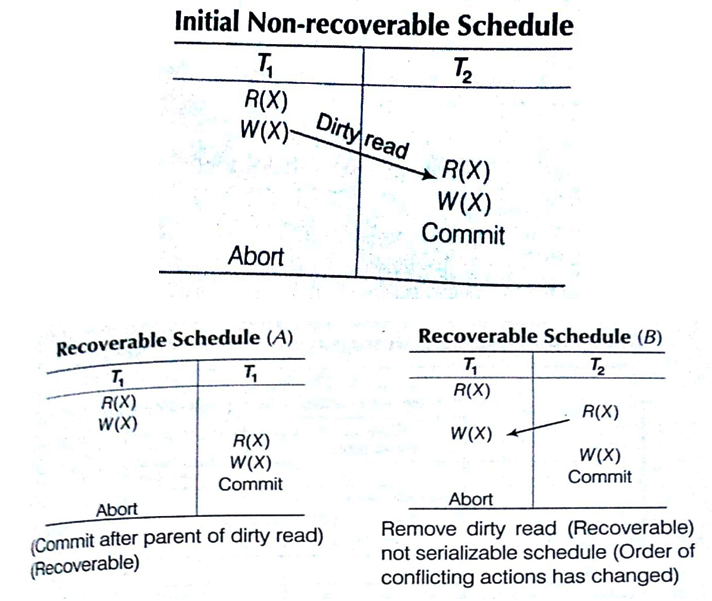

- In recoverability, there is need toad dress the effect of transaction failures on concurrently running transactions.

- Once a transaction T is committed, it should never be necessary to rollbacks T.

- The schedules those meet this criterion are called recoverable schedules and those do not, are called non-recoverable.

Recoverable Schedule

Once a transaction T is committed, it should never be necessary to rollback T. The Schedules under this criteria are called recoverable schedules and those do not, are called non-recoverable.

A schedule S is recoverable, if no transaction T in S commits until all transactions T’, that have written an item that T reads, have committed.

(This schedule is not recoverable because T2 made a dirty read and committed before T1, T1 should have committed first.)

The above given schedule is non-recoverable because when the recovery manager rolls back T1 transaction, then X gets its initial value before updation. But T2 has already utilized the wrong value of X that was updated by T1 and T2 committed. Now, database is consequently in an inconsistent state.

Composition of Recoverable

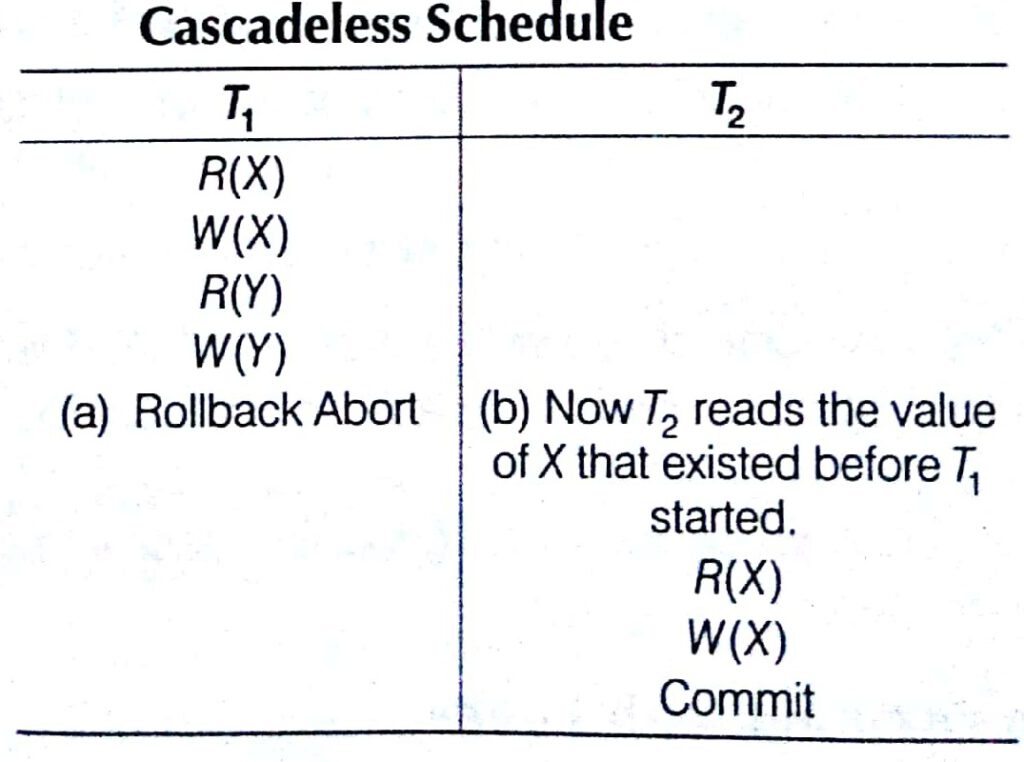

Cascadeless Schedule

These schedules avoid cascading rollbacks. Even, if a schedule is recoverable to recover correctly from failure of transaction 7;, we may have to rollback several transactions. This phenomenon in which a single transaction failure which leads to a series of transaction rollbacks, is called cascading rollbacks.

Cascading rollback is undesirable, since it leads to the undoing of a significant amount of work. It is desirable to restrict the schedules to those,here cascading rollback Cannot occur. Such schedules are called cascadeless schedules.

Strict Schedule

A schedule is strict,

- If overriding of uncommitted data is not allowed.

- Formally, if it satisfies the following conditions

(i)Ti reads a data item X after Tj has terminated (aborted or committed).

(ii) T writes a data item X after Tj has terminated (aborted or committed).

Concurrency Control with Locking

- Previously, we have characterized transaction schedules based on serializability and recoverability.

- If concurrency control with locking technique is used, then locks prevent multiple transactions from accessing the items concurrently.

- Access on data only, it TA ‘has lock’ on data.

- Transactions request and release locks.

- Schedule allows or differs operations based on lock table.

Lock

A lock is a variable associated with a data item, that describes the status of the item with respect to possible operations that can be applied to it. (e.g., read lock, write lock). Locks are used as means of synchronizing the access by concurrent transactions to the database items. There is one lock per database item.

Problems Arising with Locks

There are two problems which are arised when using locks to control the concurrency among transactions

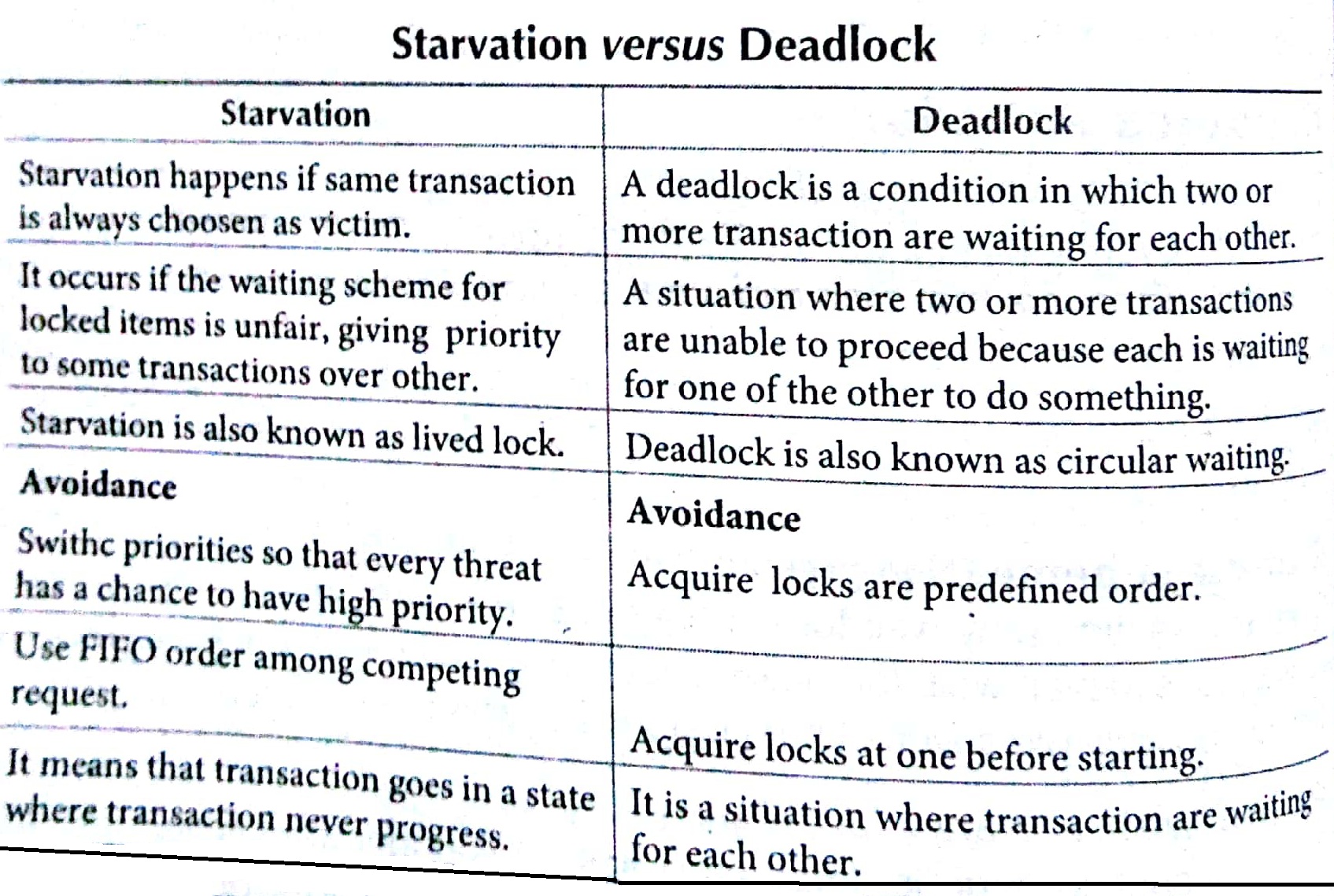

Deadlock :Two or more competing transactions are waiting for each other to complete to obtain a missing lock.

Starvation: A transaction is continually denying the access to a given data item,

Purpose Of Concurrency Control With Locking

- To enforce isolation among conflicting transactions.

- To preserve database consistency.

- To resolve read-write, write-read and write—write conflicts

Locking is an operation that secures

- Permission to read

- Permission to write a data item

Lock (X): Data item X is locked on behalf of the requesting transaction. Unlocking is an operation which removes these permissions from the data item.

Unlock (X): Data item X is made available to all other transaction,

Types of Locks

The two types of Locks are given below

Binary Locks

A binary lock has two states

Locked/Unlocked

If a database item X is locked, then X cannot be accessed by any other database operation.

Shared/ Exclusive Locks (Read/Write Locks)

There are three states

Read locked/Write locked/Unlocked

Several transactions can access the same item X for reading (shared lock), however if any of the transactions want to write the item x, the transaction must acquire an exclusive lock on the item.

Note: Using binary locks or read/write lock, in transactions, does not guarantee serializability of schedules on its own.

Key Points • ””

- Lock unlock are atomic operations (all or nothing).

- If every transaction in a schedule follows the two-phase locking protocol, the schedule is guaranteed to be serializable.

Guaranteeing Serializability by Two-phase Locking

A transaction is said to follow the two-phase locking protocol, if all Locking operation precede the first unlock operation in the transaction.

Such a transaction can be divided into two phases.

Expanding or Growing Phase: During which new locks on items can be acquired but none can be released.

Shrinking Phase: During which existing locks can be released but no new locks can be acquired.

Variations of Two-phase Locking (2PL) Technique

Basic 2PL (2 Phase Locking): Transaction locks data items incrementally. This may cause the problem of deadlock.

Conservative 2PL (Static 2PL): Prevents deadlock by locking all desired data items before transaction begins execution. However, it is difficult to use in practice because of the need to predeclare the read-set and write-set, which is not possible in most situations.

Strict 2PL : A more stricter version of basic algorithm, where unlocking of write lock is performed after a transaction terminates (commits or aborts and rolled back). Hence, no other transaction can read or write an item that is written by transaction.

Rigorous 2PL: Strict 2PL is not deadlock free. A more restrictive variation of strict 2PL, is rigorous 2PL, which also guarantees strict schedule. In this variation, a transaction does not release any of its locks (exclusive or shared) until after it commits or aborts.

Deadlock

A deadlock is a condition in which two or move transaction are waiting for each other deadlock (T1 and T2). An example is given below

Deadlock Prevention

A transaction locks all data items, it refers to before it begins execution. This way of locking prevents deadlock, since a transaction never waits for a Data item. The conservative two-phase locking uses this approach.

Deadlock Detection and Resolution

In this approach, deadlocks are allowed to happen. The scheduler maintains a wait-for-graph for detecting cycle. If a cycle exists, then one transaction involved in the cycle is selected (victim) and rolled back.

Key Points

- A wait-for-graph is created using the lock table. As soon as a transaction is blocked, it is added to the graph.

- When a chain like Ti waits for Tj, Ti waits for TK and Tk waits for Tj or Tj occurs, then this creates a cycle. One of the transaction of the cycle is selected and rolled back.

Deadlock Avoidance

There are many variations of two-phase locking algorithm. Some avoid deadlock- by not lefting the cycle to complete. This is as soon as the algorithm discovers that blocking a transaction is likely to create a cycle, it rolls back the transaction.

Following schemes use transaction times-tamps for the sake of deadlock avoidance

Wait-die scheme (Non-preemptive)

Older transaction may wait for younger one to release data item. Younger transactions never wait for older ones; they are rolled back instead. A transaction may die several times before acquiring the needed data item.

Wound-wait scheme (Preemptive)

older transaction bounds (forces rollback) of younger transaction instead of waiting for it. Younger transactions may wait for older ones. May be fewer rollbacks than wait-die scheme.

Starvation

Starvation occurs when a particular transaction consistently waits or restarted and never gets a chance to proceed further. In a deadlock resolution scheme, it is possible that the same transaction may consistently be selected as victim and rolled back.

Time-stamp Based Concurrency Control Algorithm

This is a different approach that guarantees serializability involves using transaction time-stamps to order transaction execution for an equivalent Serial schedule.

Time-stamp

A time-stamp is a unique identifier created by the DBMS to identify a transaction.

Key Points

- Time-stamp is a monotonically increasing variable (integer) indicating the age of an operation or a transaction.

- A larger time-stamp value indicates a more recent event or operation.

The algorithm associates with each database item X with two Time Stamp (TS) values

Read_TS (X)

The read time stamp of item X; this is the largest time-stamp among time-stamps of transactions that have successfully read item X.

Write_TS (X)

The write time-stamp of item X ; this is the largest time-stamp among all the time-stamps of transactions that have successfully written item X. There are two Time-Stamp based Concurrency Control Algorithm

Basic Time-Stamp Ordering (TO)

Whenever some transaction T tries to issue a R(X) or a W(X) operation, the basic time-stamp ordering algorithm compares the time-stamp order of transaction execution is not violated. If this is violated, then transaction execution is not violated. If this order is violated, then transaction T is aborted. If T is aborted and rolled back, any transaction T1 that may have used a value written by T1 must also be rolled back. Similarly any transaction T2 that may have used a value written by T1 must also be rolled back and so on. This effect is known as cascading rollback.

The concurrency control algorithm must check whether conflicting operations violate the time-stamp ordering in the following two cases.

Transaction T issues a W (X) operation

If read TS (X) > TS (T) or if write TS (X) > TS (T), then an younger transaction has already read the data item, so abort and roll back T and reject the operation.

If the condition in above part does not exist, then execute W(X) of T and set write TS (X) to TS (T).

Transaction T issues a R (X) operation

if write_TS (x) > TS (T), then an younger transaction has already written to the data item, so abort and rollback T and reject the operation.

If write_TS (X) ≤ TS (T), then execute R(X) of T and set read_TS (T) to the larger of TS (T) and the current read=TS (X).

Strict Time–stamp Ordering (TO)

A variation of basic time-stamp ordering called strict time-stamp ordering, ensures that the schedules are both strict (for easy recoverability) and serializable.

Transaction T issues a W(X) operation If TS (T) > read_TS (X), then

Delay T until the transaction T’ that wrote or read X has terminated committed or aborted.

Transaction T issues a R(X) operation If TS (T) > write_TS (X), then

Delay T until the transaction T’ that wrote or read X has terminated committed or aborted.

Thomas’s Write Rule

- If read_TS(X)>TS(T), then abort and rollback T and reject the operation.

- If write_TS(X)>TS(T), then just ignore the write operation and continue execution. This is because the most recent writes counts in case of two consecutive writes. If the conditions given in (i) and (ii) above do not occur, then execute W(X) of T and set write_TS (X) to TS(T).

in this cyberpointsolution sites we are trying to explain each and every concept of Concept of Schedule in DBMS Tutorial Study Material Notes with Example

Multiversion Concurrency Control Techniques

This approach maintains a number of versions of a data item and the allocates the right version to a read operation of a transaction, Thus, unlike other mechanisms a read operation in this mechanism is never rejected,

Side effect Significantly more storage is required to maintain multiple versions.

Validation Based Concurrency Control. Protocol

In this, execution of transaction Ti is done in three phases.

Read and Execution Phase Transaction 7; writes only to temporary local variables.

Validation Phase Transaction 7; performs a validation test to determine, if local variables can be written without violating serializability.

Write Phase If 7; is validated, the updates are applied to the database. otherwise Ti is rolled back.

Note: The above three phases of concurrently executing transactions can be interleaved, but each transaction must go through the three phases that order.

Each transaction Ti; has three time-stamps

Start (Ti) The time when 7; started its execution.

Validation (Ti) The time when 7; entered its validation phase.

Finish (Ti) The time when 7; finished its write phase.

Serializability order is determined by time-stamp given at validation time to) increase concurrency. Thus, TS (Ti) is given the value of validation (T). For all Tj with TS (Ti) < TS (Ti) either one of the following conditions holds

(i)Finish (Ti) < Start (Tj) (ii) Start (7;) < Finish (Ti ) < Validation (Ti )

and the set of data items written by Ti does not intersect with the set of data items read by Tj .Then, validation succeeds and Tj can be committed Otherwise, validation fails and Ti is aborted.

Multiple Granularity

Allow data items to be of various sizes and define a hierarchy of data a hierarchy of data granularities, where the small granularities are nested within larger ones. it can be represented graphically as a tree. When a transaction locks a node in the tree explicitly, it implicitly locks all the nodes descendants in the same node.

Coarse Granularity: The larger the data item size, the lower the degree of concurrency.

Fine Granularity The smaller the data item size, the more locks to be managed and stored and the more lock/unlock operations needed.

Key Points

- Multiple users can access databases and use computer system simultaneously because of the concept of multiprogramming, which allows the computer to execute multiple programs or processes at the same time.

- if the computer system has multiple hardware processors (CPUs), parallel processing of multiple processes is possible.

- While one transaction is waiting for a page to be read from disk, the CPU can Process another transaction. This may increase system throughput (the average number of transactions completed in a given time).

- Interleaved execution of a short transaction with a long transaction allows the short transaction to complete first. This gives improved response time (average time taken to complete a transaction).

- A transaction may be incomplete because the (database) system crashes or because it is aborted by either the system or the user (or application).

- Complete transactions are committed.

- Consistency and isolation are primarily the responsibility of a scheduler. Atomicity and durability are primarily responsibility of recovery manager.

Interleaving

Multiprogramming operating systems execute some commands from one process, then suspend that process and execute some commands from text process and so on. A process is resumed at the point, where it was suspended, whenever it gets its turn to use the CPU again. Hence, this suspended, is actually known as interleaving.

Sorting in Design and Analysis of Algorithm Study Notes with Example

Follow Us On Cyber Point Solution Youtube Channel : Click Here

Follow Us on Social Platforms to get Updated : twiter, facebook, Google Plus

Learn More Ethical Hacking and Cyber Security click on this link. cyber security